Large Behavior Models: Dexterous Manipulation

Axis Lab

This video explores the mechanisms behind large behavior models (LBMs) and their impact on robot dexterity. It examines whether and how pre-training improves manipulation, drawing on diffusion policies and multi-task learning.

Key highlights:

- Benefits of pre-training for dexterous manipulation.

- Comparison of single-task vs. multi-task learning approaches.

- Examples of complex robotic tasks like apple cutting.

- Discussion on achieving robustness in robotic policies.

Content Summary

Key Points

- 1Large Behavior Models (LBMs) show promise in enhancing robot dexterity, enabling complex manipulation tasks previously unattainable.

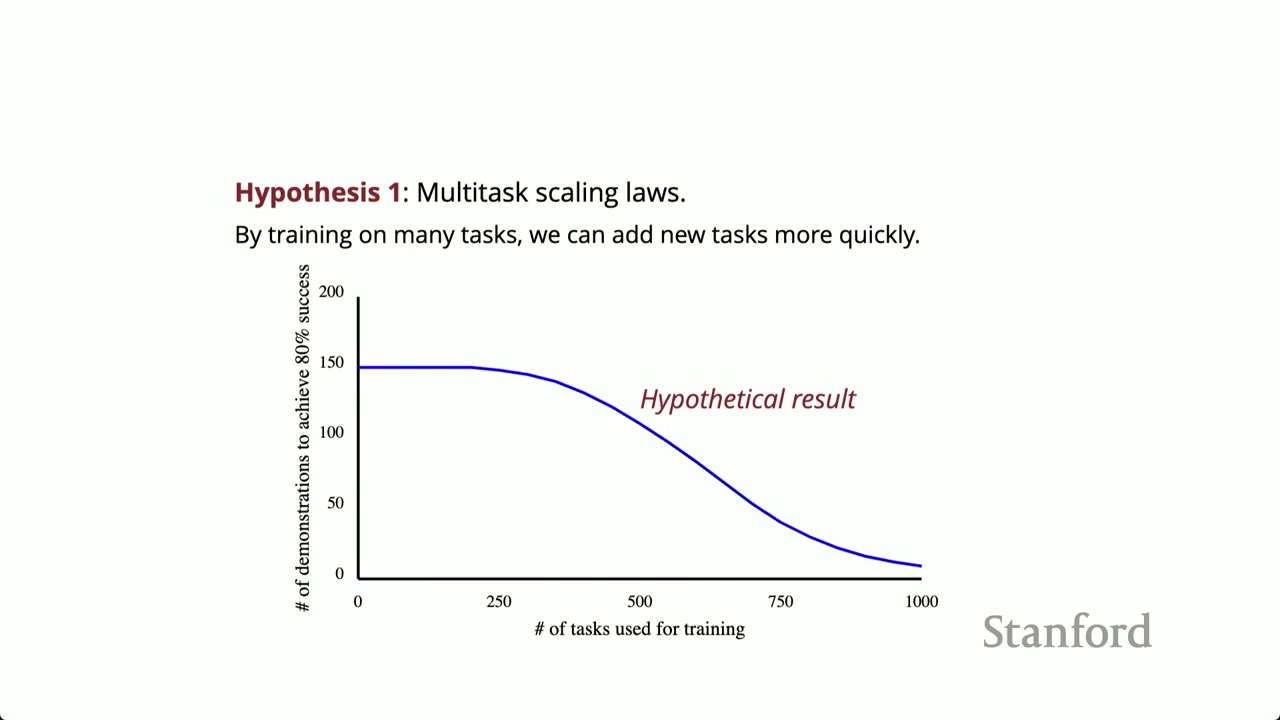

- 2Pre-training LBMs on diverse datasets can improve performance and robustness compared to single-task training, but requires careful evaluation.

- 3Rigorous A/B testing and randomized trials are crucial for accurately evaluating policy performance and avoiding statistical biases.

- 4Simulation-based testing is essential for efficient policy evaluation, offering repeatability and scalability despite potential sim-to-real gaps.

- 5Data normalization and other engineering details can significantly impact performance, often more so than architectural changes.

- 6The choice of tasks and initial conditions significantly affects the evaluation of robot policies, necessitating careful consideration of task difficulty and diversity.

- 7Statistical rigor is paramount when comparing different robot policies, requiring sufficient rollouts and awareness of potential biases like p-hacking.
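To make the point about sufficient rollouts concrete, here is a minimal sketch that puts a confidence interval around a measured success rate. It uses the Wilson score interval, which is a standard choice for binomial proportions but is an assumption here: the summary does not say which statistical method the lecture uses. The function name `wilson_interval` and the rollout counts are hypothetical.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial success rate.

    z=1.96 corresponds to roughly 95% confidence. The method is an
    illustrative choice, not one taken from the lecture.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must lie between 0 and trials")

    p_hat = successes / trials
    z2 = z * z
    denom = 1.0 + z2 / trials
    center = (p_hat + z2 / (2.0 * trials)) / denom
    half_width = (
        z * math.sqrt(p_hat * (1.0 - p_hat) / trials + z2 / (4.0 * trials * trials))
        / denom
    )
    # Clamp to [0, 1] to absorb floating-point rounding at the extremes.
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical rollout counts: the same 80% success rate measured
    # with 50 rollouts and with 200 rollouts.
    for successes, trials in [(40, 50), (160, 200)]:
        low, high = wilson_interval(successes, trials)
        print(f"{successes}/{trials}: [{low:.3f}, {high:.3f}]")
```

The same observed success rate yields a noticeably wider interval with fewer rollouts, which is why two policies that look different after a handful of trials may not be distinguishable at all.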

Presentation Preview

Slide Content

The lecture introduces the concept of Large Behavior Models (LBMs) and their application in robotics, particularly for dexterous manipulation. It highlights the scaling hypothesis and the need to understand the underlying mechanisms driving the performance of complex control systems. The speaker emphasizes the importance of rigorous evaluation and scientific inquiry to validate the effectiveness of LBMs.

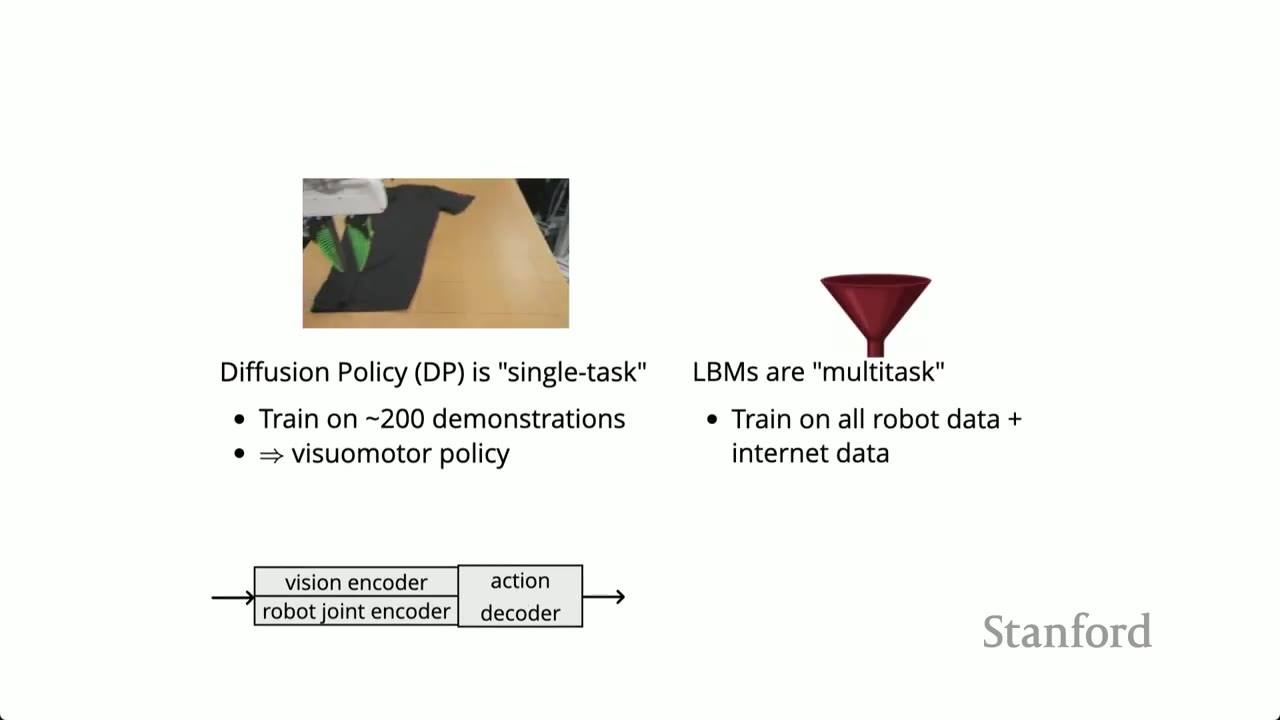

Diffusion policy is presented as a single-task imitation learning pipeline, in which a robot is trained on teleoperated demonstrations of one specific task. LBMs are introduced as the multi-task version, leveraging a broader range of data, including robot training data, simulation data, and online data, to create language-conditioned visuomotor policies.

The lecture highlights what LBMs could enable, including robots that handle cloth, liquids, and other complex manipulations. It also emphasizes the prospect of programming robots via imprecise natural language and of developing common sense for physical intelligence, leading to more robust policies.

A side-by-side comparison is presented, contrasting a single-task diffusion policy with an LBM fine-tuned on the same task. The example demonstrates the robot making breakfast, showcasing the improved performance and robustness of the pre-trained LBM.

A more complex task, apple cutting, is presented to further illustrate the capabilities of LBMs. The robot demonstrates fine motor skills and recovery maneuvers, highlighting the potential for robustness in pre-trained models. Safety measures are emphasized due to the use of a knife.

The core question is posed: Does pre-training genuinely improve the quality of dexterous manipulation? The speaker emphasizes the need for evidence-based validation and the importance of building the right tools to make informed design decisions when iterating over policy architectures and datasets.
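One way to approach this kind of question is a randomized A/B comparison followed by a significance test. The sketch below is illustrative only and is not the lecture's tooling: it interleaves rollouts of two policies in random order, so that drift in the setup affects both equally, and then runs a two-sided permutation test on the gap in success rates. The function names, policy labels, and outcome lists are all hypothetical.

```python
import random


def randomized_schedule(policy_names, rollouts_per_policy, seed=0):
    """Return a shuffled rollout order with an equal count per policy."""
    rng = random.Random(seed)
    schedule = [name for name in policy_names for _ in range(rollouts_per_policy)]
    rng.shuffle(schedule)
    return schedule


def permutation_test(outcomes_a, outcomes_b, num_permutations=10000, seed=0):
    """Two-sided permutation test on the difference in success rates.

    Outcomes are sequences of 0 (failure) and 1 (success). Returns an
    estimated p-value with add-one smoothing so it is never exactly zero.
    """
    n_a, n_b = len(outcomes_a), len(outcomes_b)
    if n_a == 0 or n_b == 0:
        raise ValueError("both outcome lists must be non-empty")

    def rate_gap(a, b):
        return abs(sum(a) / len(a) - sum(b) / len(b))

    rng = random.Random(seed)
    observed = rate_gap(outcomes_a, outcomes_b)
    pooled = list(outcomes_a) + list(outcomes_b)

    at_least_as_extreme = 0
    for _ in range(num_permutations):
        # Under the null hypothesis the policy labels are exchangeable,
        # so reshuffle the pooled outcomes and split them again.
        rng.shuffle(pooled)
        if rate_gap(pooled[:n_a], pooled[n_a:]) >= observed - 1e-12:
            at_least_as_extreme += 1
    return (at_least_as_extreme + 1) / (num_permutations + 1)


if __name__ == "__main__":
    order = randomized_schedule(["single_task", "finetuned_lbm"], 5)
    print(order)

    # Made-up outcomes for illustration only; not results from the lecture.
    single_task = [1, 0, 1, 0, 0, 1, 0, 1, 0, 0]
    finetuned_lbm = [1, 1, 1, 0, 1, 1, 1, 1, 0, 1]
    print(permutation_test(single_task, finetuned_lbm))
```

Fixing the number of rollouts and the test before collecting data, rather than stopping once the result looks favorable, is one guard against p-hacking.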