Language Models Explained: From Basics to Applications

Stanford Online

This video explains language models, from their basic principles to their applications in various domains.

Key highlights:

- Overview of language models and their training process.

- Common limitations of language models and methods to improve them.

- Explanation of agentic language models and their unique features.

- How to use language models via API calls and prompting strategies.

- Best practices for prompting, including clear instructions and relevant context.

Overview

Key points

1. Language models predict the next word in a sequence based on training data, enabling text generation and completion.

2. Pre-training and post-training are essential stages in developing usable and instruction-following language models.

3. Prompt engineering is critical for effective LLM usage, requiring clear instructions, examples, and context.

4. Retrieval-augmented generation (RAG) enhances LLMs by incorporating external knowledge sources to reduce hallucinations.

5. Agentic language models can interact with their environment, reason, and take actions to perform complex tasks.

6. Design patterns like planning, reflection, and tool usage improve the performance and capabilities of agentic LLMs.

7. Multi-agent collaboration allows for complex tasks to be divided and handled by specialized LLM agents.

Walkthrough

Slide content

The lecture introduces agentic AI and language models, outlining the topics to be covered: language model overview, limitations, improvement methods, and agentic language model design patterns.

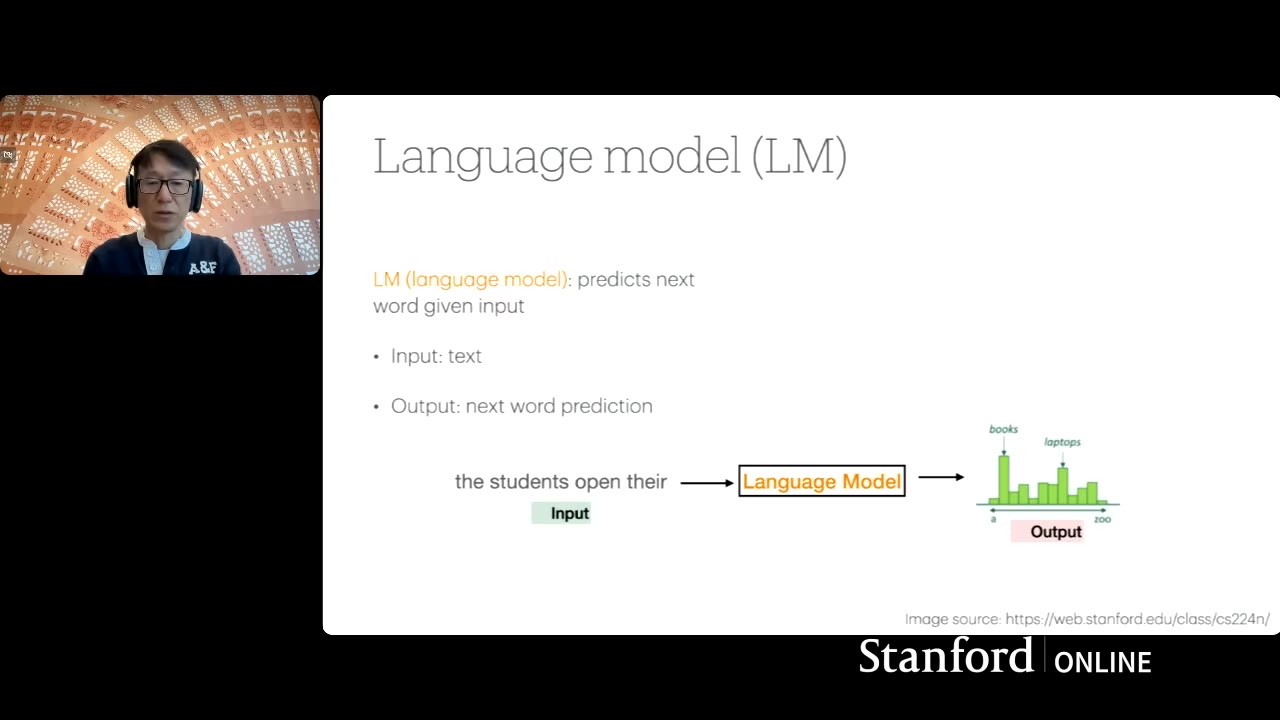

A language model predicts the next word given input text. Trained on large datasets, it assigns a probability to every word in its vocabulary and selects the most likely one as the completion. Repeating this step, with each selected word appended to the input, generates longer sequences.
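The prediction loop can be sketched with a toy bigram model. This is only an illustration: the corpus, the function names, and the count-based probabilities are assumptions made for the example, and real language models use neural networks over much longer contexts.

```python
from collections import Counter, defaultdict

# Tiny illustrative corpus (an assumption, not taken from the lecture).
CORPUS = "the cat sat on the mat . the cat ate the fish ."


def train_bigram(text):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, word):
    """Turn the follow-up counts for `word` into a probability distribution."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


def generate(counts, start, max_words=5):
    """Greedy decoding: repeatedly append the most likely next word."""
    out = [start]
    for _ in range(max_words):
        probs = next_word_probs(counts, out[-1])
        if not probs:  # no known continuation for this word
            break
        out.append(max(probs, key=probs.get))
    return " ".join(out)


model = train_bigram(CORPUS)
print(next_word_probs(model, "the"))
print(generate(model, "the", max_words=3))
```

Here `generate` always picks the single most likely word; sampling from the distribution instead is a common alternative that gives more varied text.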

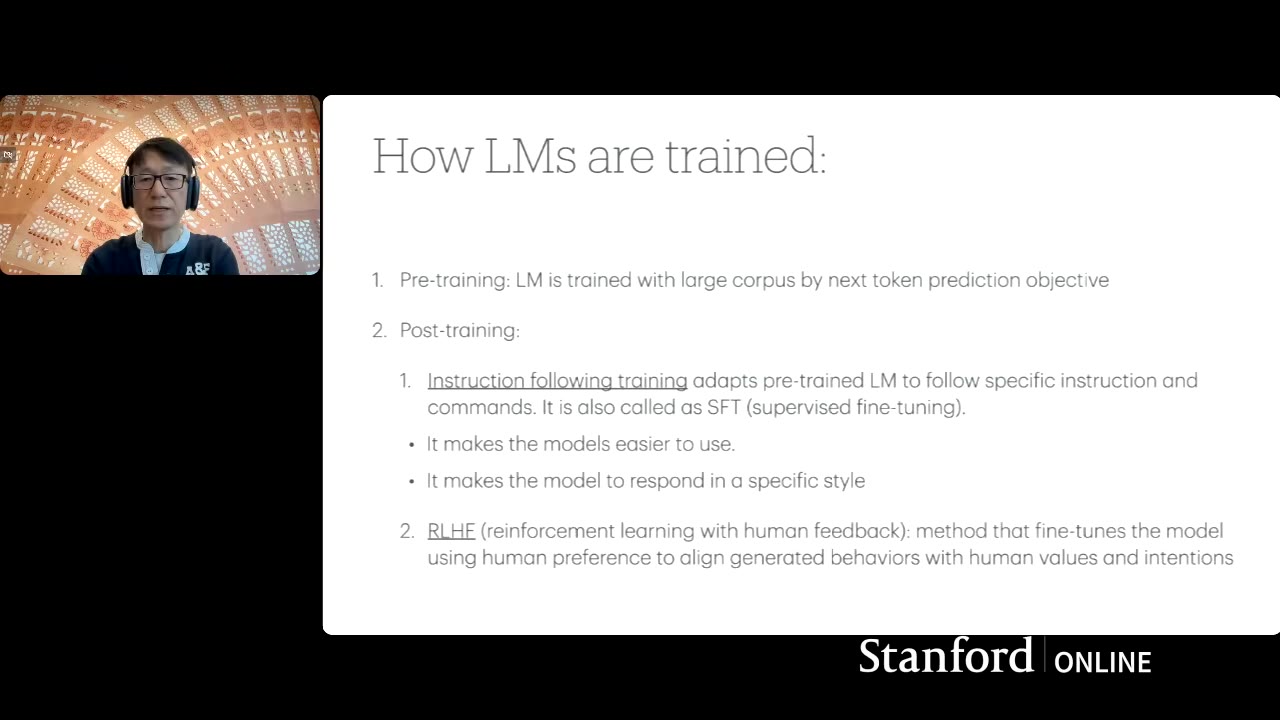

Training involves pre-training on large text corpora with a next-word prediction objective, followed by post-training with instruction-following training and reinforcement learning from human feedback (RLHF) to align the model with user expectations and preferences.

Instruction-following training uses datasets of instructions paired with expected outputs. The model is trained to generate the expected output for each instruction, which lets it answer specific questions and respond in requested styles.
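As a rough illustration of what such a dataset might look like, the sketch below turns instruction/output records into prompt and target strings. The records, field names, and template are hypothetical; actual post-training pipelines define their own formats.

```python
# Hypothetical instruction-tuning records; the field names are assumptions.
EXAMPLES = [
    {"instruction": "Translate to French: Hello", "output": "Bonjour"},
    {"instruction": "Summarize in one word: The sky is blue.", "output": "Sky"},
]

# Hypothetical template that marks where the instruction ends
# and where the model's response should begin.
TEMPLATE = "### Instruction:\n{instruction}\n\n### Response:\n"


def build_training_pair(example):
    """Return (prompt, target): the model sees the prompt and learns to emit the target."""
    prompt = TEMPLATE.format(instruction=example["instruction"])
    return prompt, example["output"]


pairs = [build_training_pair(ex) for ex in EXAMPLES]
for prompt, target in pairs:
    print(prompt + target)
    print("---")
```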

Trained language models are capable of generating text given instructions and are used in various applications, including AI coding assistants, domain-specific AI copilots, and conversational interfaces like ChatGPT. They can be accessed via cloud-based APIs or hosted locally.

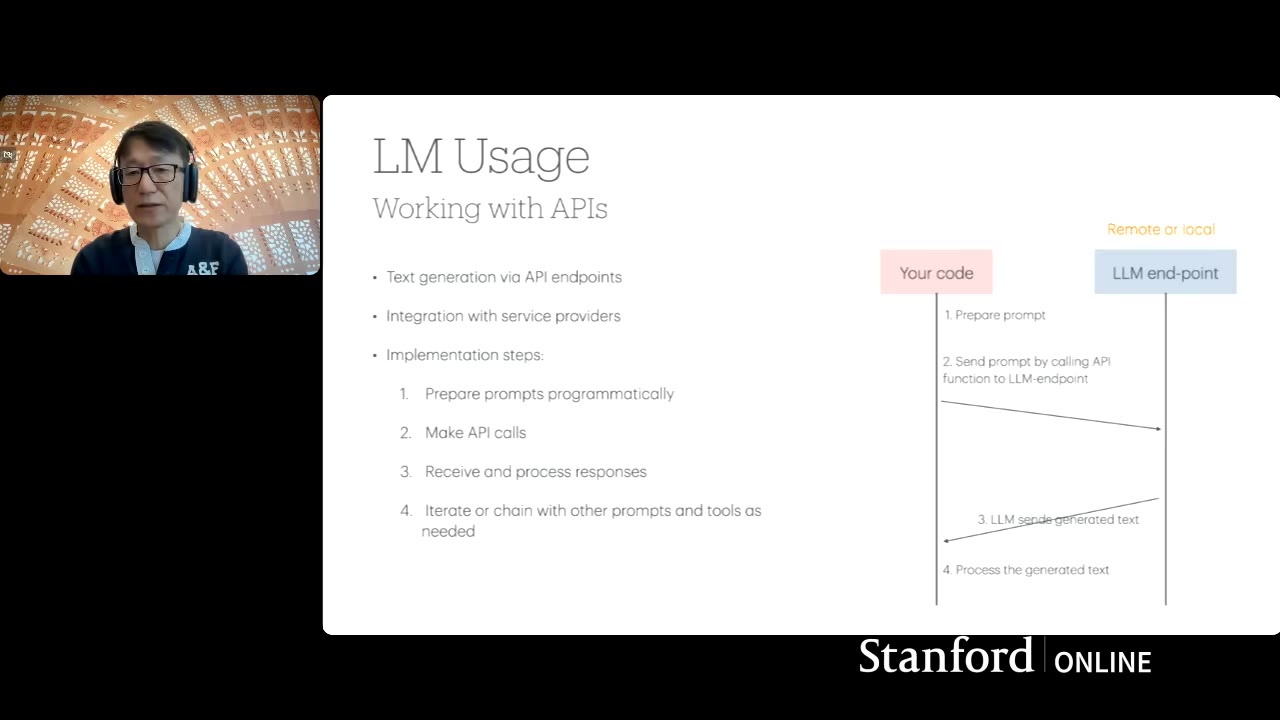

Using language models involves preparing natural language input (prompts) and making API calls to model providers. The model generates output, which is then parsed and used by the software. Effective prompt engineering is crucial for desired results.
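The prompt, call, and parse steps might look like the sketch below. `call_model` is a hypothetical stand-in for a provider's API client, stubbed locally so the example runs offline; the prompt wording and the JSON reply format are likewise assumptions.

```python
import json


def build_prompt(review):
    """Prepare the natural-language input: clear instruction, output format, then the data."""
    return (
        "Classify the sentiment of the review as positive or negative.\n"
        'Reply with JSON like {"sentiment": "positive"}.\n\n'
        f"Review: {review}"
    )


def call_model(prompt):
    """Hypothetical stand-in for an API call to a model provider.

    A real client would send the prompt over the network and return the
    model's text; this stub fakes a reply so the sketch is self-contained.
    """
    label = "negative" if "terrible" in prompt.lower() else "positive"
    return json.dumps({"sentiment": label})


def parse_output(raw):
    """Parse the model's text defensively, since output may be malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    return data.get("sentiment")


def classify(review):
    """Full round trip: build the prompt, call the model, parse the result."""
    return parse_output(call_model(build_prompt(review)))


print(classify("The battery life is terrible."))
```

Keeping prompt construction and output parsing in separate functions makes it easier to revise the prompt without touching the rest of the software.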